Search Engine Optimization problems are usually considered the marketing department’s concern, and system administrators and software developers often have no idea when something they do causes serious trouble for website marketing.

Today we will talk about one of the biggest problems you may encounter in Search Engine Optimization: content duplication, and what you, as a web developer, system administrator or website owner, should do to prevent it.

Content duplication is the situation in which the content of your page, or part of it, can be found in several places on the Internet. Imagine that you wrote an article and someone simply copied it to their website. Now imagine that a search engine decides this article is the best answer to someone’s query, but there are multiple copies of it on the Internet. What would it do?

More often than not, the search engine would return the URL of the original article. First of all, the search engine remembers when the article was first indexed. According to the logs of my blog, Google visits me every 15 minutes, so about 15 minutes after publication I no longer care much about content theft. This also means that copying the article won’t bring the “thief” anything good from Google.

Another way a search engine identifies the original is a link to the original article on the copy page. So if you copied an article and left a link to the original page, you’re clean and won’t have trouble with either Google or the author, but it most likely won’t bring you much traffic either: your position in the SERP (Search Engine Results Page) will be lower.

Now, where is the problem?

The problem lies in the fact that the copies might belong to you. They could even be one and the same file. I’ll give you an example.

As a system administrator, you are hosting a website, say www.example.com. You have e-mail addresses ending with example.com, and you can be pretty sure people will type that address into their browser’s address bar. So what do you do? Right, you set up the website binding so that it serves both www.example.com and example.com, and maybe some other name too. Even “better” is when your website answers to any name at all, because it simply responds on the IP address and port and ignores the host name.

Now, according to Google and common sense, www.example.com and example.com (I will call the latter the parent for simplicity) are two different domain names, meaning they could be served by different web servers. The PageRank collected by the www domain won’t be shared with the parent, and vice versa. Even if you only ever share the “example.com” address, some users will type “www.” and share that address with others, so it will be indexed and collect page authority too. As a result, PageRank and other authority-based factors will be divided between the “two” resources.

Some system administrators solve that problem in a strange and ineffective way: they enable only the www domain or only the parent domain. This causes confusion when someone tries to reach the site using an address that looks perfectly normal, except that no web server answers it.

When you have two domain names, the odd-looking but correct solution is to create two websites, one of which redirects to the other using an HTTP 301 redirect (more about it later).

If you are using a web hosting provider, creating a website for a second-level domain name usually produces a single website that serves both the www and the parent domain. In that case, you will have a duplication problem. However, most hosting providers will let you create a website for the parent domain, then create a subdomain (a third-level domain) for “www.” and instruct the provider to redirect all of its requests to the parent domain.

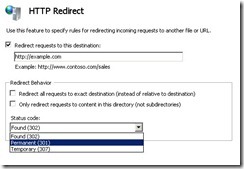

If you own the server, you’ll have to create a new website serving the “www.” subdomain and redirect all requests to the parent domain using an HTTP 301 redirect. That’s how it looks in Internet Information Services (IIS), the default web server for Windows:

As you can see, everything is straightforward: you set the address and the redirection method, and the web server will even be kind enough to redirect to the same page on the target server, meaning that https://www.example.com/mypage.htm will be redirected to https://example.com/mypage.htm. Quite handy.
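For readers who handle this in application code rather than in the web server settings, the same-page redirect can be sketched roughly as follows. This is only an illustration: the function name `permanent_redirect_for` and the constant `CANONICAL_HOST` are made up for this example, and it assumes the parent domain is the name you want indexed.

```python
from urllib.parse import urlsplit, urlunsplit

# Assumption: the parent domain is the single public name of the site.
CANONICAL_HOST = "example.com"


def permanent_redirect_for(url, canonical_host=CANONICAL_HOST):
    """Return (301, target_url) when the URL uses a non-canonical host name.

    Returns None when the URL already uses the canonical host (or has no host).
    Path, query string and fragment are preserved, so every page redirects
    to the same page on the target host. Any port in the original URL is
    dropped in this sketch.
    """
    parts = urlsplit(url)
    host = parts.hostname  # already lowercased by urlsplit, or None
    if host is None or host == canonical_host:
        return None
    target = urlunsplit(
        (parts.scheme, canonical_host, parts.path, parts.query, parts.fragment)
    )
    return 301, target
```

Called with https://www.example.com/mypage.htm it returns the status 301 together with https://example.com/mypage.htm, and called with the parent-domain address it returns None, meaning the page should be served normally.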

So, if you are the system administrator of the web server and your website is going to be indexed by search engines (meaning you don’t have a robots.txt that disallows indexing), you should never create one website that serves more than one public name.

Duplicating pages

Sometimes website owners create either page copies or rules that show the same page under different names. This website is no exception: each article has a unique name based on a GUID (globally unique identifier) and an SEO-friendly name that looks like the title of the page. The problem is that the page is accessible under two names, and to a search engine these are two pages that duplicate each other. So if half of the visitors link to the page using the GUID version of the address and half link using the human-friendly one, each address will get only half of the PageRank, because the rank is split between the “two” pages.

Moreover, “index.htm” and “index.html” are different names, although website configurations sometimes include rules that serve both extensions if at least one of the files exists, just so as not to lose the visitor. You can do that, but only if your method uses an HTTP 301 redirect.

The 301 redirect is a permanent redirect. When you use it, the search engine removes the old page from the index and replaces it with the new one, the target of the redirect. That way, the rank of the old resource is transferred to the new one. A 302 redirect is a temporary one, and the difference is that although the search engine will follow it, it will not index the target page, so the rank stays with the old resource. And if you are thinking about redirecting with the meta refresh tag or JavaScript, just forget it, as search engine bots don’t follow these two, even though Google now runs JavaScript.

As a side note: when you decommission an old website, keep a 301 redirect to another site of yours switched on for at least one year.

If your page is hosted on Windows, its name is case-insensitive. That means a request for “Index.htm” returns the contents of “index.htm”, even though the file names contain different characters: “i” and “I” have different ASCII codes, and on a case-sensitive system such as Linux these file names would be considered different and you wouldn’t get the same result.

So, if you have a Windows environment, avoid URLs that are easier to read but don’t exactly match the file name. For example, if your page is “getmoreinformation.aspx”, don’t link to it as “GetMoreInformation.aspx”, even though it returns the same result. The results of these two requests will be indexed separately.
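If mixed-case links already exist in the wild, one possible safeguard is to answer them with a 301 to the exact name. The sketch below assumes that every file on the site has an all-lowercase name, which may not be true for your site; the function name `lowercase_redirect` is invented for this example.

```python
def lowercase_redirect(path):
    """Return (301, lowercase_path) when the requested path contains
    uppercase characters, or None when it is already all lowercase.

    Assumption: all real file names on the site are lowercase, so the
    lowercase form of a path is its one canonical spelling. Only the path
    is handled here; query strings are out of scope for this sketch.
    """
    canonical = path.lower()
    if path == canonical:
        return None
    return 301, canonical
```

A request for /GetMoreInformation.aspx would be answered with a 301 to /getmoreinformation.aspx, so only one spelling ends up in the index.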

Why doesn’t Google figure it out?

Why doesn’t Google assume that two pages on the same website with the same content are actually one page? Because you could change one of them later, after the page is indexed. Say you have two pages, “index1.htm” and “index2.htm”, that return the same result. If Google shared the authority between them and you then changed index2.htm to show different content, it would still have a higher rank than it deserves. That would be an easy way to boost the rank of bogus pages or even domains.

The same goes for the “www.” domain: although it’s common practice for the parent domain and www to point to the same place, it’s not a rule and you can change it at any moment, so Google won’t make that assumption.

So, although it looks odd and unpleasant (you’ll have some additional manual work instead of using the simple functionality web servers provide), that’s the right way, and Google is not to blame.

Developing non-duplicating websites

If you are a software developer, there are a few best practices you should consider.

First of all, consider using the “canonical” tag. Just like a meta tag, it should be placed in the head of your page (the “<HEAD>” tag, you know). It looks like this:

<link rel="canonical" href="https://example.com/mypage.htm" />

Since each of your pages should display substantially different content, consider putting this tag into the pages that are the source of the information. Take this blog, for example. As you may notice, when you browse categories, tags or months, you see a list of articles, each with a “More” link that leads to the complete article. If the content weren’t cut, you would see complete articles flowing one after another, and that would create duplicate content.

The problem here is that when someone searches for something for which the article is the best answer, the search engine would most likely return the index page instead of the article page. So you would be sent to the index page, which may have changed since it was indexed, and you wouldn’t be able to guess why Google sent you there.

The solution is either not to display most of the page content anywhere else, or to place the canonical tag in the article page. The href attribute of the tag should contain the complete URL of the page. The aggregation page shouldn’t have the canonical tag then.

I would advise assembling the canonical tag in your web application at runtime, while letting the system administrator specify the host name in the configuration file. For each page you would then concatenate the host name from the configuration file and the path of the current page.
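A minimal sketch of that concatenation might look like this. The function name `canonical_link` and its parameters are hypothetical, the host name would come from whatever configuration mechanism your application uses, and the sketch assumes https and deliberately ignores the query string, which only makes sense if your pages are identified by path alone.

```python
from html import escape
from urllib.parse import urlsplit


def canonical_link(configured_host, request_url, scheme="https"):
    """Build a canonical <link> tag for the page being served.

    configured_host: host name taken from the configuration file
                     (assumption: the administrator sets it, e.g. "example.com").
    request_url:     the URL or path the visitor actually requested.
    The host the visitor used is ignored; only the path is kept.
    """
    path = urlsplit(request_url).path or "/"
    href = f"{scheme}://{configured_host}{path}"
    # Escape the URL so it is safe inside an HTML attribute.
    return f'<link rel="canonical" href="{escape(href, quote=True)}" />'
```

Whichever host name the visitor used, the tag always points at the configured one, so the administrator can change the public name without touching the code.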

Parameters

To make things worse, the parameters after the page name form part of the unique URL as well. That means example.com/shop.php?category=notebooks and example.com/shop.php?category=cars are two different pages. They do in fact present different information, as you may guess from the value of the category parameter, but in this example both are served by one script, shop.php. Make sure that different parameters never produce the same content.

Actually, SEO has one rule you should take into consideration: if it looks like a page, it should have its own page address. I don’t say a separate page, because the result could come from the same server-side script, but with URL rewriting techniques you can make it look like a separate page to both human visitors and search engine bots.

In fact, don’t use parameters at all. Resources with a "?" in the URL are not cached by some proxy caching servers. Remove the query string and encode the parameters into the URL.
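One possible way to generate such addresses is sketched below. The mapping is an assumption made for illustration, not a standard: the script extension is dropped and the parameter values, ordered by parameter name, become path segments. The function name `query_to_path` is invented, and your server would still need a matching rewrite rule to route the new path back to the script.

```python
from urllib.parse import parse_qsl, quote, urlsplit


def query_to_path(url):
    """Turn '/shop.php?category=notebooks' into '/shop/notebooks'.

    Hypothetical scheme: strip the script extension, then append each
    parameter value as a path segment. Parameters are sorted by name so
    that the same set of parameters always yields the same address.
    """
    parts = urlsplit(url)
    base = parts.path
    last_segment = base.rsplit("/", 1)[-1]
    if "." in last_segment:
        base = base.rsplit(".", 1)[0]  # '/shop.php' becomes '/shop'
    segments = [quote(value, safe="") for _, value in sorted(parse_qsl(parts.query))]
    return "/".join([base] + segments)
```

With this scheme, example.com/shop.php?category=cars would be linked as example.com/shop/cars, and two links that list the same parameters in a different order end up at a single address.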

Summary

Different trades blend in unexpected ways, and we have to adjust the rules of our own games and sacrifice simplicity for a better future of the project. We have to wear many hats, or at least check with the bearers of those hats, to make our website not only technologically perfect but also flawless from the marketing perspective, as even the best captain won’t win a sea fight on a fishing boat.